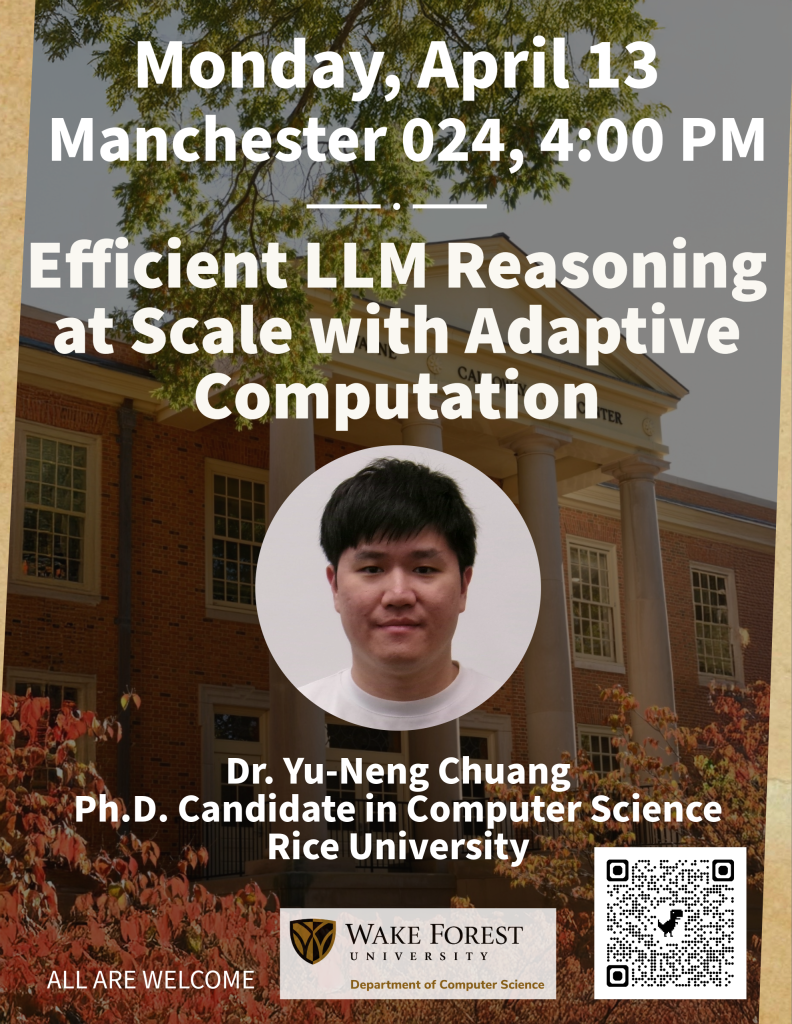

Efficient LLM Reasoning at Scale with Adaptive Computation by Dr. Yu-Neng Chuang

Join us Monday, April 13, at 4:00 PM in Manc 024! Dr. Yu-Neng Chuang from Rice University will share his research titled “Efficient LLM Reasoning at Scale with Adaptive Computation”.

Title: Efficient LLM Reasoning at Scale with Adaptive Computation

Abstract: The increasing reliance on Large Language Models (LLMs) for complex reasoning tasks has intensified the latency and cost challenges of deploying LLMs at scale. There are three distinct bottlenecks driven by the unconditional use of LLM reasoning: computational waste from redundant input contexts, overallocation of massive LLMs to simple prompts, and latency induced by unnecessary “overthinking” during long output generation.

To address these challenges, we argue that LLM reasoning should be treated as an adaptive, conditional resource rather than a static, computationally expensive default of the reasoning pipeline. Building on this principle, we present an efficiency-aware framework that systematically optimizes reasoning across the input, model, and output stages. First, we propose prompt compression techniques that reduce input context length while preserving task-relevant signals, significantly reducing prefilling costs. Second, we introduce a “System 1” to “System 2” routing mechanism that aligns model confidence with correctness, dynamically directing simpler queries to smaller, faster models and reserving larger, more powerful models for complex inputs. Third, we develop an adaptive reasoning approach that limits the length and complexity of LLM outputs, using multi-step reasoning only when warranted and relying on lightweight alternatives for simpler cases.

Beyond these methods, I will also share perspectives on emerging trends in LLM reasoning, highlighting how adaptive and resource-aware paradigms may shape the next generation of scalable LLM systems and applications.

Bio: Yu-Neng (Allen) Chuang received his Ph.D. in Computer Science from Rice University, advised by Dr. Xia (Ben) Hu and Dr. Vladimir Braverman. His research focuses on building efficient and reliable large language model (LLM) systems, with an emphasis on LLM post-training and agentic frameworks. He has published extensively in leading AI and machine learning venues, including ICML, ICLR, and NeurIPS. In industry, he has interned with Google DeepMind, Apple Siri, and Samsung Research America. His research has been widely applied to real-world industrial pipelines and prototypes, including at Google DeepMind, Meta AI, and Samsung.

All are welcome!