Master’s Thesis Defenses / Project Presentations

During the week of April 13, six CS MS students will share findings from months of in-depth research. We invite you to celebrate their hard work and academic achievements with us.

Tuesday, April 14 | 11:00 AM | Manc 241

Title: Development of a Request Management System for SOFO

Abstract: The Student Organization Finance Office (SOFO) at Wake Forest University processes a considerable number of funding requests each semester through a spreadsheet-based system that lacks scalability, data integrity, and management efficiency. This project presents the design and development of a request management system intended to replace the existing workflow with a structured and secure platform. The system integrates with Qualtrics, the platform used to submit SOFO funding request forms, to automatically ingest submitted data. It also provides an interface for staff to review, approve, and track requests while maintaining related organizational data. The resulting platform improves efficiency, enhances data reliability, and provides a scalable foundation for managing student organization funding processes.

Tuesday, April 14 | 2:00 PM | Manc 108

Title: Design and Implementation of an Interactive Process Scheduling Simulator

Abstract: An interactive process scheduling simulation application was designed and implemented for iPadOS. The system models operating system scheduling behavior through a modular, event-driven simulation engine integrated with a responsive user interface. The program represents processes as structured objects and manages their execution using a centralized event queue, where events are generated, prioritized, and executed according to a deterministic algorithm. The main simulation loop advances time, processes events, updates system state, and recalculates future events, enabling accurate modeling of multiple scheduling algorithms including First Come First Serve, Priority (preemptive and non-preemptive), Round Robin, and Shortest Job Next. The design follows a Model-View-ViewModel (MVVM) architecture, separating the simulation logic from the user interface. All core data structures and scheduling logic reside within the view model, which serves as the single source of truth and exposes reactive state updates to the SwiftUI-based interface. The simulation runs on a dedicated background thread for continuous execution, while also supporting a step-based mode for discrete event inspection. User interaction is facilitated through an interface that supports parameter adjustment, file input handling, simulation control, and real-time visualization of process state transitions. Special emphasis is placed on synchronization between the simulation engine and the animation system, ensuring that events are serialized visually even when they occur at the same simulation time. Additionally, robust input validation, error handling, and dynamic parameter configuration enhance system reliability and usability. Overall, the program demonstrates a cohesive integration of scheduling logic with interactive visualization, providing both an accurate simulation tool and an educational platform for understanding process scheduling.
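
The abstract describes the simulation engine only at a high level, and the app itself is built with SwiftUI. As a minimal Python sketch of the general idea (not the project's code), a centralized event queue can be a min-heap ordered by time, then event type, then insertion order, so that simultaneous events always resolve the same way. The `Process` fields, the `simulate_fcfs` name, and the rule that completions precede arrivals at equal times are assumptions chosen for illustration, and only FCFS is shown.

```python
import heapq
from collections import deque
from dataclasses import dataclass

# Assumed tie-breaking rule: at equal times, completions are handled before arrivals.
COMPLETE, ARRIVE = 0, 1


@dataclass
class Process:
    pid: int
    arrival: int
    burst: int
    finish: int = -1


def simulate_fcfs(processes):
    """Event-driven FCFS simulation (illustrative sketch); returns {pid: finish_time}."""
    by_pid = {p.pid: p for p in processes}
    events = []  # min-heap of (time, event_kind, seq, pid)
    seq = 0      # insertion counter keeps ordering deterministic
    for p in processes:
        heapq.heappush(events, (p.arrival, ARRIVE, seq, p.pid))
        seq += 1

    ready = deque()  # FIFO ready queue of pids
    running = None
    while events:
        time, kind, _, pid = heapq.heappop(events)
        if kind == ARRIVE:
            ready.append(pid)
        else:  # COMPLETE: record finish time and free the CPU
            by_pid[pid].finish = time
            running = None
        # If the CPU is idle, dispatch the next ready process and schedule its completion.
        if running is None and ready:
            running = ready.popleft()
            heapq.heappush(events, (time + by_pid[running].burst, COMPLETE, seq, running))
            seq += 1
    return {pid: p.finish for pid, p in by_pid.items()}
```

In a design like this, replacing the FIFO `ready` deque with a priority queue, or adding a time-slice expiry event, is how other policies such as Priority or Round Robin would slot into the same loop.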

Tuesday, April 14 | 5:15 PM | Virtual

Title: Interpretable Safe Reinforcement Learning for Single- and Multi-Agent Systems

Abstract: This thesis studies how to make reinforcement learning safe and interpretable in single-agent and multi-agent settings, motivated by the limited ability of existing explainable reinforcement learning methods to account for safety constraints and failure propagation. It develops a unified framework for single-agent reinforcement learning that combines local task and risk critics with a global abstract policy graph to explain behavior, identify adversarial vulnerabilities, support formal verification, guide falsification, and enable runtime shielding without retraining. Experiments on MuJoCo navigation tasks and a Type 1 diabetes dosing environment show that the explanation framework improves user understanding, discovers substantially more safety violations than standalone model checking and baseline falsifiers, and reduces unsafe behavior through shielding, although shielding does not provide a complete guarantee. For multi-agent reinforcement learning, the thesis introduces cross-Hessian-based coupling analysis and a two-stage failure-forensics framework to identify the true failure source, distinguish downstream-first anomalies from root causes, and trace failure propagation through interpretable contagion graphs. Results across MPE and SMAC settings show that the method captures local interaction structure and supports accurate Patient-0 identification. Overall, this thesis demonstrates that interpretability can function as a practical foundation for safety assurance, enabling explanation, debugging, verification, and targeted intervention in reinforcement learning systems.

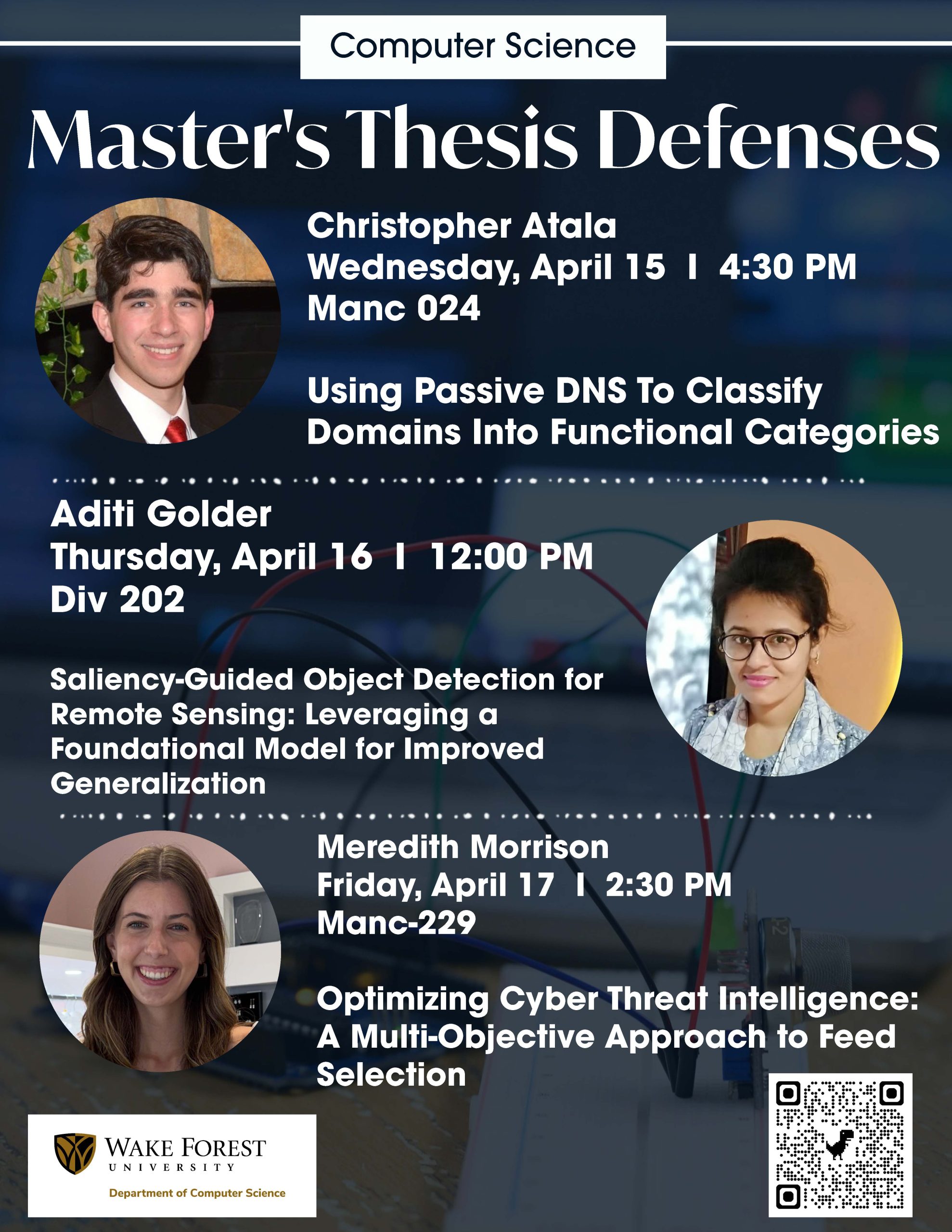

Wednesday, April 15 | 4:30 PM | Manc 024

Title: Using Passive DNS To Classify Domains Into Functional Categories

Abstract:

The rapid and unending expansion of the Internet continues to lead to the creation of millions of domains that serve a wide range of purposes. Accurately classifying domains by their function is essential for cybersecurity applications such as content filtering, compliance enforcement, and network monitoring. However, existing classification methods often rely on manual labeling, proprietary datasets, or real-time observations that are difficult to scale and lack reproducibility.

This research presents a reproducible, data-driven methodology for functional domain classification using passive DNS (pDNS) data from DomainTools’ Farsight DNSDB [12]. To capture patterns in domain behavior and structural evolution over time, the approach extracts features across four dimensions: lexical characteristics, subdomain structure and temporal dynamics, DNS records and services, and infrastructure and network attributes. These features are combined with additional data sources, such as GeoIP and Autonomous System metadata from the MaxMind GeoIP dataset [8], to train supervised machine learning models.

The resulting framework achieves promising classification accuracy across three functionally distinct categories: university, government, and shopping. This methodology supports both academic research and operational cybersecurity applications.
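
The abstract names the feature groups but not the individual features. As a hedged illustration of the lexical group only, the sketch below computes a few features commonly used for domain names (length, label count, digit ratio, hyphen count, character entropy, TLD). The `lexical_features` function and this particular feature set are assumptions, not the thesis's actual feature list.

```python
import math
from collections import Counter


def lexical_features(domain: str) -> dict:
    """Compute a few illustrative lexical features for a domain name (hypothetical set)."""
    name = domain.lower().rstrip(".")  # normalize case and drop a trailing root dot
    labels = name.split(".")
    chars = name.replace(".", "")
    if not chars:
        raise ValueError("empty domain name")
    counts = Counter(chars)
    n = len(chars)
    # Shannon entropy (bits per character) of the characters, ignoring dots
    entropy = -sum((c / n) * math.log2(c / n) for c in counts.values())
    return {
        "length": len(name),
        "num_labels": len(labels),
        "digit_ratio": sum(ch.isdigit() for ch in chars) / n,
        "hyphen_count": chars.count("-"),
        "char_entropy": entropy,
        "tld": labels[-1],
    }
```

Features like these would typically be concatenated with the subdomain, DNS-record, and infrastructure features before training a classifier.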

Thursday, April 16 | 12:00 PM | Div 202

Title: Saliency-Guided Object Detection for Remote Sensing: Leveraging a Foundational Model for Improved Generalization

Abstract:

Remote sensing object detection remains challenging because target objects are often small, densely distributed, and embedded in visually complex backgrounds. In such settings, conventional bounding-box supervision provides only coarse spatial guidance and may allow detectors to rely on contextual background cues rather than object-specific evidence, limiting robustness and cross-domain generalization. This dissertation addresses that limitation by introducing a saliency-guided training framework that converts saliency from a post-hoc interpretability tool into an explicit supervisory signal for object detection.

The proposed framework is built on DEIM-D-FINE and integrates three components during training: Grad-CAM-based saliency maps extracted from the detector backbone, SAM2-generated object masks derived from bounding-box prompts, and a Dice-based saliency alignment loss combined with the original detection objective. Two alignment strategies are investigated: upsampling the Grad-CAM map to image resolution and downsampling the SAM2 mask to the native saliency resolution. Experiments are conducted on FCAT tropical-forest palm imagery, the PASCAL VOC 2012 benchmark, and a cross-region transfer setting from Ecuador to Iquitos. Performance is evaluated using AP@50, AP@75, and AP@50:95, together with quantitative saliency-mask alignment analysis.

Across all evaluated DEIM-D-FINE variants, saliency-guided training consistently improves detection performance over the baseline. The downsampling strategy yields the strongest and most stable gains, improving D-FINE-L on FCAT-6 from 79.83 to 85.55 AP@50 and from 56.02 to 60.23 AP@50:95, and improving D-FINE-X on PASCAL VOC from 81.92 to 88.34 AP@50 and from 66.46 to 71.83 AP@50:95. In cross-region evaluation, D-FINE-X improves from 41.50 to 47.11 AP@50 and from 22.10 to 25.05 AP@50:95. Saliency analysis further shows substantially better object alignment, with Coverage and IoU increasing from 37.34% and 26.15% in the baseline to 65.16% and 56.42% under downsampling. Ablation studies identify α = 0.5 as the best trade-off between detection and saliency supervision.

These findings show that foundation-model-generated masks can provide effective additional spatial supervision for object detection and that explicitly aligning detector saliency with object regions encourages more object-centered representations. Overall, the dissertation demonstrates that saliency-guided learning is a practical and effective strategy for improving localization quality, reducing reliance on background-correlated cues, and strengthening generalization in remote sensing object detection.
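
For readers unfamiliar with the alignment step, the following is a minimal pure-Python sketch of a soft Dice loss between a saliency map (assumed normalized to [0, 1]) and an object mask downsampled to the saliency resolution, added to the detection loss with weight `alpha`. The average-pooling downsampler, the `det_loss + alpha * dice` weighting, and all function names are assumptions; the thesis's exact formulation and implementation may differ.

```python
def downsample_mask(mask, factor):
    """Average-pool an HxW mask by an integer factor (H and W assumed divisible by factor)."""
    h, w = len(mask), len(mask[0])
    out = []
    for i in range(0, h, factor):
        row = []
        for j in range(0, w, factor):
            block = [mask[i + di][j + dj] for di in range(factor) for dj in range(factor)]
            row.append(sum(block) / len(block))
        out.append(row)
    return out


def soft_dice_loss(saliency, mask, eps=1e-6):
    """1 - soft Dice coefficient between two same-shaped maps with values in [0, 1]."""
    s = [v for row in saliency for v in row]
    m = [v for row in mask for v in row]
    inter = sum(a * b for a, b in zip(s, m))
    return 1.0 - (2.0 * inter + eps) / (sum(s) + sum(m) + eps)


def total_loss(det_loss, saliency, full_res_mask, factor, alpha=0.5):
    """Hypothetical combined objective: detection loss plus weighted saliency alignment."""
    mask_small = downsample_mask(full_res_mask, factor)  # bring mask to saliency resolution
    return det_loss + alpha * soft_dice_loss(saliency, mask_small)
```

Downsampling the mask rather than upsampling the saliency map keeps the loss at the backbone's native resolution, which matches the strategy the abstract reports as most stable.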

Friday, April 17 | 2:30 PM | Manc 229

Title: Optimizing Cyber Threat Intelligence: A Multi-Objective Approach to Feed Selection

Abstract:

In modern cybersecurity operations, threat intelligence feeds provide critical Indicators of Compromise (IoCs) to track malicious activity. However, organizations often ingest multiple overlapping feeds from various Cyber Threat Intelligence (CTI) providers, leading to redundant data, irrelevant noise, and increased false positives. This data overload severely strains both automated detection systems and human analysts. To mitigate this issue, this thesis formulates feed selection as an NP-hard, multi-objective optimization problem: selecting a minimal subset of feeds that guarantees complete coverage of the required IoCs while minimizing unneeded noise and feed ingestion costs.

To address this feed selection problem, this research evaluates a range of solution strategies, introducing localized greedy heuristics and precision-aware variants, exact numerical optimization, and scalable evolutionary search. Furthermore, a problem-space analysis using XGBoost predictive modeling identifies the underlying data characteristics that determine algorithmic success. The findings demonstrate that algorithm performance is driven by a small number of structural properties, most notably forced constraints, overlap patterns, and precision variance. Ultimately, while exact solvers such as Integer Linear Programming find optimal solutions on small, constrained datasets, evolutionary algorithms such as NSGA-II provide the greatest overall robustness and scalability on complex, highly redundant real-world datasets. Together, these insights offer actionable strategies to mitigate data overload and prioritize efficient, high-quality feed selection.
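
The greedy heuristics can be illustrated with the classic weighted set-cover rule: repeatedly pick the feed with the lowest penalty per newly covered required IoC. In this hypothetical sketch the penalty is ingestion cost plus `noise_weight` times the number of non-required IoCs a feed brings in; the cost model, `noise_weight`, and the function name are assumptions, not the thesis's precision-aware variants.

```python
def greedy_feed_selection(feeds, required, cost, noise_weight=0.0):
    """
    Greedy weighted set-cover sketch (hypothetical cost model).
    feeds: dict feed_name -> set of IoCs the feed provides
    required: set of IoCs that must all be covered
    cost: dict feed_name -> positive ingestion cost
    """
    uncovered = set(required)
    chosen = []
    while uncovered:
        best, best_ratio = None, float("inf")
        for name, iocs in sorted(feeds.items()):  # sorted for deterministic tie-breaking
            gain = len(iocs & uncovered)          # newly covered required IoCs
            if gain == 0:
                continue
            noise = len(iocs - required)          # IoCs outside the required set
            ratio = (cost[name] + noise_weight * noise) / gain
            if ratio < best_ratio:
                best, best_ratio = name, ratio
        if best is None:
            raise ValueError("required IoCs cannot be covered by the available feeds")
        chosen.append(best)
        uncovered -= feeds[best]
    return chosen
```

For example, with feeds `A = {1, 2, 3, "x"}`, `B = {3, 4}`, and `C = {1, 2, 3, 4, "y", "z", "w"}`, unit costs, and required IoCs `{1, 2, 3, 4}`, the function returns `["C"]` when `noise_weight=0.0` and `["B", "A"]` when `noise_weight=1.0`, a small instance of the coverage-versus-noise trade-off the multi-objective formulation captures.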

All are welcome!